Engineering Diplomacy Playbook

Turning a Handbook into a Living Reasoning System

When I first uploaded chapters of our recently published Open-Source Water Diplomacy Handbook into NotebookLM, my goal wasn’t efficiency. It wasn’t speed. And it certainly wasn’t automation.

It was curiosity.

I wanted to see whether an AI system could do something closer to what an engineer‑diplomat does in practice: connect principles to problems, tools to contexts, and cases to decisions without collapsing complexity into jargon or “best practices.”

What emerged was encouraging. And instructive.

What Worked and Why That Matters

The first thing NotebookLM did well was structure.

When prompted to create a Crosswalk Index, it correctly linked challenges to tools, tools to cases, and cases to scale: community, subnational, transnational. That alone is not trivial. It showed that the structure of the inputs mattered: chapters uploaded separately, clear naming conventions, and an explicit source map that told the system how the pieces were meant to relate, from concepts to challenges to tools to case studies.

This mirrors a generalizable lesson: thinking begins with structure. If the structure is sloppy, the reasoning will be too.

Another encouraging sign was restraint. NotebookLM largely resisted hallucination. It stayed grounded in the uploaded sources and cited them appropriately. In domains like water, climate, and transboundary governance, where facts are contested and uncertainty is inherent, careful synthesis of numbers and narrative matters more than a summary.

In short, NotebookLM showed it could become a reasoning aid, not just a summarizer.

Where It Fell Short and Why That’s the Point

The first version of the Crosswalk Index also revealed a familiar problem. NotebookLM treated chapter titles as challenges.

“Mapping the Water Diplomacy Problem Space.”

“The Role of Scientific Uncertainty.”

“Creating and Distributing Benefits.”

These are not challenges. NotebookLM mistook organizational categories for diagnostic conditions. But challenges in water diplomacy are not headings. They are conditions: misdiagnosing a complex system as simple; confusing scientific uncertainty with value-based ambiguity; applying tools at the wrong scale; and assuming better data will resolve political disagreement. How we address them has consequences.

This wasn’t a failure of NotebookLM. It was a reflection of the way we think. We name categories instead of diagnosing conditions. We describe the box rather than opening it to see what’s broken inside.

Engineering Diplomacy is about refusing that shortcut by thinking again.

The Subtle Drift Toward “Best Practices”

A second pattern was equally revealing. Tools like Joint Fact-Finding, Modeling, and Mutual Gains Negotiation were presented as if they were generically helpful. They are reasonable and often serve as good tools.

An engineer-diplomat doesn’t ask, “Is this a good tool?” They ask: What conditions are needed for this tool to work? When does this tool fail? What happens if it’s applied at the wrong scale, or under the wrong political conditions?

The initial crosswalk read like a polished handbook summary. What it lacked was conditionality and actionability, which are the core of principled pragmatism. Tools don’t succeed because they are well designed. They succeed because the underlying conditions (scientific credibility, human empathy, and political viability) make them actionable.

What Can We Do Differently that AI Can’t

The case template we are developing to link the Water Diplomacy Handbook with AquaPedia does one critical thing that current AI systems struggle to do: it refuses to suggest tools until contextual conditions and actionability have been fully explored.

Take the Colorado River case. The challenge was not “drought” or “overallocation.” The diagnostic insight was more precise: fixed allocations were built on an assumption of stationary hydrology in a non-stationary climate. That diagnosis mattered because it narrowed the decision space, pointing to a specific pathway: reinterpretation through adaptive mechanisms, not wholesale renegotiation. Only then did tools like joint fact-finding and modeling become actionable.

The same logic applies to other cases. In the Sundarbans case, urgency comes from ecological thresholds and an upcoming treaty renewal. But acting quickly by reallocating water would fall into a familiar urgency–actionability trap: pressure to act outruns the conditions needed for action to work.

The template does not prescribe solutions. It disciplines sequencing: what must be understood and agreed before something can responsibly be done. Problem diagnosis first. Decision pathways second. Tools last. Reversing that order is where most well-intentioned interventions fail.

What This Experiment Taught Me About Using AI Well

The lesson here is not “AI needs better prompts.”

The deeper lesson is this: AI exposes where our own thinking defaults to abstraction instead of diagnosis.

NotebookLM did exactly what I asked. It associated chapters, tools, and cases coherently. But coherence is not the same as actionability.

To move from one to the other, I need to intervene not by adding more tools or case studies, but by sharpening associations into insights, insights into actions, and contexts into generalizations. This move from association to generalization cannot be easily automated.

Why This Matters Beyond NotebookLM

This exercise mirrors a much larger pattern in water, climate, health, and infrastructure decision-making. We (as well as current-day AI tools) are very good at: producing frameworks, developing tools, and documenting case studies.

We are far less good at: diagnosing why similar interventions succeed in one place and fail in another, distinguishing scientific uncertainty from ambiguity of interpretation, and designing responses that are technically credible, socially acceptable, and politically feasible.

That gap is precisely where Engineering Diplomacy operates.

From Static Handbook to Living System

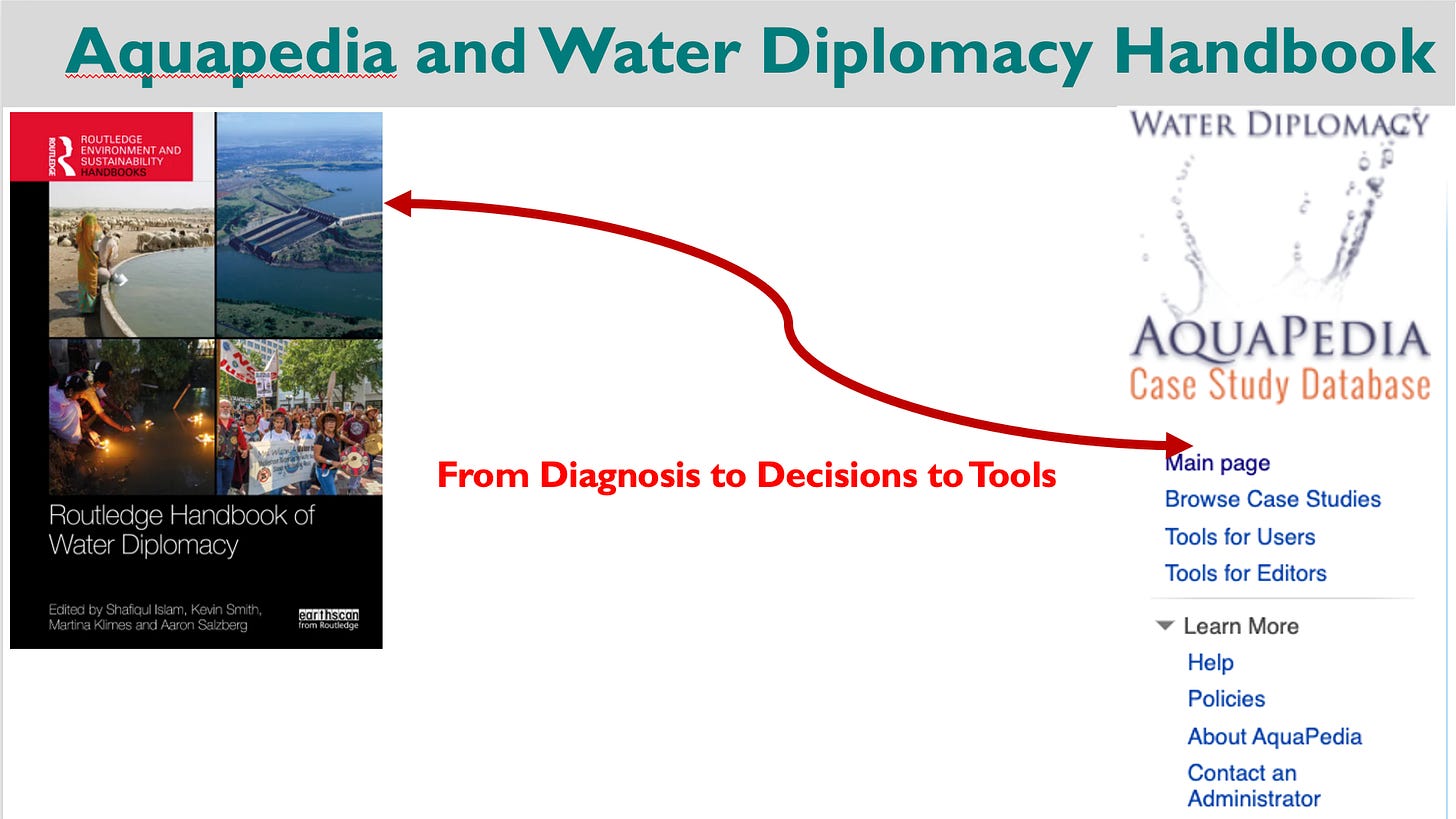

A handbook, by definition, is frozen in time. AquaPedia, by contrast, is alive with new and revised cases, new contexts, new failures, and new learning by doing.

The challenge is how to link the Handbook and AquaPedia deliberately.

Use the Handbook to define the current knowledge base: acknowledge what matters and why, define the core challenges, identify the problem space, and provide decision and negotiation tools with a focus on actionability.

Use AquaPedia as the evidence engine to show the success and failure of the knowledge base from the Handbook. AquaPedia should not be treated as a case library for inspiration. It should function as a testing ground: Where does this tool actually work? Under what conditions does it fail? What assumptions in the handbook break down in practice?

Each AquaPedia case needs to explicitly map to: one or more diagnostic challenges, the tools that were attempted, the scale at which those tools were applied, and what outcomes were anticipated and realized.
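One way to make that mapping explicit is to give every case the same small record and check it for gaps. The sketch below is only an illustration under stated assumptions: the class names, field names, and the placeholder case are hypothetical and are not AquaPedia’s actual schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Scale(Enum):
    # The three scales named in the crosswalk discussion.
    COMMUNITY = "community"
    SUBNATIONAL = "subnational"
    TRANSNATIONAL = "transnational"


@dataclass
class ToolAttempt:
    # Hypothetical record of one tool applied in one case.
    tool: str
    scale: Scale
    anticipated_outcome: str
    realized_outcome: str


@dataclass
class CaseRecord:
    # Hypothetical case record; not an actual AquaPedia data model.
    name: str
    diagnostic_challenges: list[str]
    attempts: list[ToolAttempt]

    def missing_fields(self) -> list[str]:
        """Return mapping gaps; an empty list means the case is fully mapped."""
        gaps = []
        if not self.diagnostic_challenges:
            gaps.append("no diagnostic challenge")
        if not self.attempts:
            gaps.append("no tool attempt")
        for attempt in self.attempts:
            if not attempt.anticipated_outcome.strip():
                gaps.append(f"{attempt.tool}: anticipated outcome missing")
            if not attempt.realized_outcome.strip():
                gaps.append(f"{attempt.tool}: realized outcome missing")
        return gaps


# Placeholder values for illustration only; they describe no real case.
placeholder = CaseRecord(
    name="Placeholder case",
    diagnostic_challenges=["tool applied at the wrong scale"],
    attempts=[
        ToolAttempt("Joint Fact-Finding", Scale.SUBNATIONAL, "shared baseline data", "")
    ],
)
print(placeholder.missing_fields())
```

A record like this does not do the diagnosis. It only makes visible when a case has been filed without one, or when an anticipated outcome was never compared with what actually happened.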

Maintain a crosswalk index as the bridge between the Handbook contents on one side and the AquaPedia case studies on the other with explicit notes on transferability and limits. Not “what goes with what,” but what works when, and why.

When AquaPedia cases contradict handbook expectations, that’s not noise. It’s the signal.

Those contradictory signals are where learning happens. And where the next edition of the handbook needs to evolve. A living handbook is not alive because it updates itself mechanically. It’s alive because it keeps learning by doing and thinking again, with humans as well as with machines, using new evidence, new contexts, and lessons that resist easy generalization.
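A crosswalk that answers “what works when, and why” could be as simple as grouping case evidence by challenge and tool, then pulling out the entries where practice contradicted expectation. The following is a minimal sketch, assuming a flat row format; the rows, case names, and notes are hypothetical placeholders, not findings from the Handbook or AquaPedia.

```python
from collections import defaultdict

# Hypothetical placeholder rows:
# (case, challenge, tool, scale, met_expectation, note on conditions)
ROWS = [
    ("Case A", "wrong-scale application", "Joint Fact-Finding",
     "community", True, "parties accepted a shared data source"),
    ("Case B", "wrong-scale application", "Joint Fact-Finding",
     "transnational", False, "no agreed convening body"),
    ("Case C", "uncertainty vs. ambiguity", "Modeling",
     "subnational", True, "model scope negotiated first"),
]


def build_crosswalk(rows):
    """Group case evidence under each (challenge, tool) pair."""
    index = defaultdict(list)
    for case, challenge, tool, scale, met, note in rows:
        index[(challenge, tool)].append(
            {"case": case, "scale": scale, "met_expectation": met, "note": note}
        )
    return dict(index)


def contradictions(index):
    """List entries where the realized outcome did not meet expectations."""
    return [
        (challenge, tool, entry["case"], entry["note"])
        for (challenge, tool), entries in index.items()
        for entry in entries
        if not entry["met_expectation"]
    ]


crosswalk = build_crosswalk(ROWS)
for item in contradictions(crosswalk):
    print(item)
```

The code only surfaces the contradictions. Deciding what they mean for the next edition remains a human judgment.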

This interaction with NotebookLM didn’t give me a recipe for writing a cookbook. But it helped surface the issues we need to work on to keep refining the Engineering Diplomacy Playbook.

A Playbook becomes useful not when it grows longer, but when it is continuously tested against reality and helps us to change our minds and actions for desirable outcomes.